Microsoft has been releasing select pieces of information about developing against Windows 10. It starts simply with the idea of Windows Core, the refactored common core of Windows that exposes a unified API. This gives developers of universal apps things like a single file I/O stack, a consistent way to access APIs such as HttpClient, etc.

This process has been many years in the making. It started with a move to a consistent UI that could be seen across Xbox, Windows and phones, and almost without fanfare we found out that the kernels had been shared across multiple devices. Last year we were given a really rough approximation of a universal development environment, but on closer inspection it was simply Visual Studio providing two related projects (Windows and Windows Phone) and thus two binaries for deployment.

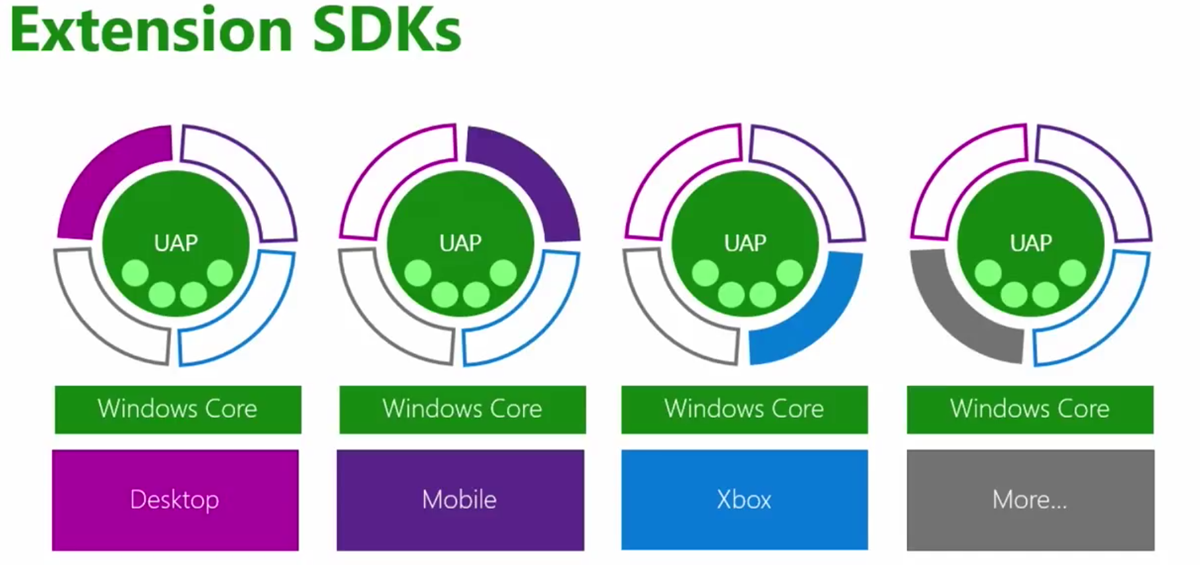

Windows 10 brings the whole story to completion with the introduction of the Universal App Platform (UAP).

Windows App Development

When we start writing apps to target Windows 10 devices (and that could be PC, Mobile, IoT, Xbox, Surface Hub and HoloLens), we are developing against a set of contracts that are guaranteed to work on compatible Windows 10 devices. This promotes the following abilities:

- One package

- One binary

- One API surface across Windows devices

- One platform for both your apps and Microsoft apps

Light Up is the new Windows catchphrase for the process of feature detection and discovery. This deliberate developer exercise is what allows your single package to deploy to any Windows device, but as the responsible developer you are asked to first detect the features that the device supports. At design time you can explicitly select the Universal App Extension SDKs you want to support. Presently these include Windows.Desktop, Windows.Mobile and Windows.Xbox (I expect the extension list to grow as more device families are released).

Windows Core exists on each device type, giving you access to the UAP. Stubs/shims exist on other devices so that your mobile-based app does not blow up when running in an Xbox context (for example). The following are the main methods to help you test the capabilities supported (on the Windows.Foundation.Metadata.ApiInformation class):

- IsApiContractPresent

- IsEnumNamedValuePresent

- IsEventPresent

- IsMethodPresent

- IsPropertyPresent

- IsReadOnlyPropertyPresent

- IsTypePresent

- IsWriteablePropertyPresent

As a Windows/Windows Phone developer on 8.1, you are probably already aware of these conditional compilation symbols:

- WINDOWS_PHONE_APP

- WINDOWS_APP

This is a basic example of how we would have made a Windows Phone app usable within a Windows context.

    #if WINDOWS_PHONE_APP
    // Compiled only into the Windows Phone binary
    Windows.Phone.UI.Input.HardwareButtons.BackPressed += this.HardwareButtons_BackPressed;
    #endif

In general, every time you include a compiler directive like this it results in two or more binaries, but by using the ApiInformation class you can do the following kind of run-time detection and produce a single assembly for deployment:

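    // Full name of the type to probe for (only present on devices with hardware buttons)
    string typeName = "Windows.Phone.UI.Input.HardwareButtons";

    if (Windows.Foundation.Metadata.ApiInformation.IsTypePresent(typeName))
    {
        // Safe to hook the hardware back button on this device
        Windows.Phone.UI.Input.HardwareButtons.BackPressed += Back_BackPressed;
    }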

Apparently Microsoft is eating its own dog food, and a bunch of apps being developed internally use the exact same process: Office (touch first), Skype, Camera, Photo, People Hub, etc.

Adaptive UX

If you have done any recent web development and design, then dealing with varying screen sizes is a task you are familiar with. Microsoft has apparently spent a non-trivial amount of time fleshing out how developers can easily tackle the problem with Adaptive User Interface. Based on a set of adaptive controls, it quickly enables an experience tailored to the device. The base building blocks appear to be:

- Ensure large and small screen appropriateness

- Deliver polished and attractive user experience

- Support mouse, keyboard, touch, etc

Just like its CSS and jQuery counterparts, a trigger is set up in XAML and fires when the layout falls within a certain size range (this is called an Adaptive Trigger). The Adaptive Trigger is paired with Setters (both are declared on a visual state), which update the layout to be more appropriate to the new size.

The problem of screen sizes is just the start of course, so each type of device will also come with a Shell optimized for it (e.g. Continuum) and its own input mechanisms: mouse, keyboard, touch, one-handed operation (for phones), and the Xbox controller. Following from these traditional input mechanisms are natural user inputs: speech, gesture, and facial recognition.

You can plug in to whatever input metaphor works for you!

Hosted Web apps

Hosted Web apps are the final piece of the puzzle and may be an opportunity to positively impact the app gap. Conceptually, a Hosted Web app lets you present a website (hopefully one that is well formatted for a variety of devices) as an app within the App store. All the flexibility and reach of the web, with the power of an app.

You get to leverage the discoverability of the App store and its Shell integration, make your app pinnable, and also integrate Cortana! You keep leveraging your current web investments and developer workflow (publish updates to the web directly), and you still get the option to access Universal APIs like Notifications, Camera, Contact List, and Calendar.

This is all achieved via a manifest which tells the Windows Store which APIs you want your web site to be able to use (the Windows Store install process will ask for the user's permission). The developer uses JavaScript to make calls outside of the web sandbox; this is once again made possible by feature detection, which verifies you are in a Hosted Web app. If you are, you have access to the universal API plugin and can start making calls against the API directly from JavaScript. I have been a web guy for a while so this is exciting stuff!
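The feature detection step can be sketched roughly as follows. This is a minimal illustration, not an official recipe: it assumes the host exposes a global `Windows` object to the page when it runs as a Hosted Web app, and it uses `Windows.UI.Notifications` purely as an example namespace. The helper names (`isHostedWebApp`, `resolveApi`, `describeNotificationSupport`) are hypothetical and do not come from any SDK.

```javascript
// Assumption: inside a Hosted Web app the host injects a global `Windows`
// namespace; in a plain browser that global is simply absent.
function isHostedWebApp(globalObj) {
  return globalObj.Windows !== undefined && globalObj.Windows !== null;
}

// Walks a dotted path such as "Windows.UI.Notifications" and returns the
// object found there, or null if any segment along the way is missing.
function resolveApi(globalObj, dottedPath) {
  let current = globalObj;
  for (const segment of dottedPath.split(".")) {
    if (current === null || current === undefined) {
      return null;
    }
    current = current[segment];
  }
  return current === undefined ? null : current;
}

// Hypothetical helper: reports which code path the site should take.
function describeNotificationSupport(globalObj) {
  if (!isHostedWebApp(globalObj)) {
    return "browser"; // ordinary web page, stay inside the web sandbox
  }
  return resolveApi(globalObj, "Windows.UI.Notifications") !== null
    ? "hosted-with-notifications"
    : "hosted-without-notifications";
}
```

On a real page you would pass in the global object, for example `describeNotificationSupport(window)`, and only touch the Windows APIs on the hosted branches. Taking the global as a parameter keeps the check easy to exercise with plain objects and means the same site still works when opened in an ordinary browser.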

Develop Windows Apps wherever you like!
